Towards Infinitely Long Neural Simulations: Self-Refining Neural Surrogate Models for Dynamical Systems

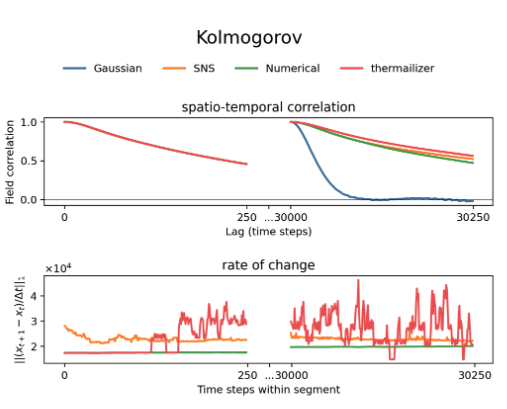

Autoregressive neural surrogate models can dramatically accelerate simulations of dynamical systems, but they often suffer from error accumulation over long time horizons. This work, led by Qi Liu, introduces a unifying framework that formalizes the trade-off between short-term accuracy and long-term consistency, which most previous approaches handled heuristically. Building on this framework, the authors propose a new hyperparameter-free method, the Self-refining Neural Surrogate (SNS), based on conditional diffusion. SNS can iteratively refine its own predictions or enhance existing models, delivering stable and accurate simulations even over very long time scales.
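
To make the error-accumulation problem concrete, here is a minimal sketch of an autoregressive rollout on a toy system. It is not the SNS method and is not taken from the paper: the rotation dynamics, the 1% per-step amplitude error, and the names `rollout`, `A_true`, and `A_surr` are all assumptions chosen for illustration. It only shows how a small one-step error compounds when each prediction is fed back as the next input.

```python
import numpy as np

def rollout(step, x0, n_steps):
    """Apply `step` autoregressively, feeding each output back as the next input."""
    xs = [x0]
    for _ in range(n_steps):
        xs.append(step(xs[-1]))
    return np.array(xs)

# Hypothetical ground truth: a rotation in the plane, which preserves the norm.
theta = 0.1
A_true = np.array([[np.cos(theta), -np.sin(theta)],
                   [np.sin(theta),  np.cos(theta)]])

# Hypothetical surrogate: the same rotation with a 1% amplitude error per step.
A_surr = 1.01 * A_true

x0 = np.array([1.0, 0.0])
n_steps = 500
truth = rollout(lambda x: A_true @ x, x0, n_steps)
pred = rollout(lambda x: A_surr @ x, x0, n_steps)

# Distance between surrogate and true trajectories at every step.
errors = np.linalg.norm(pred - truth, axis=1)

print(f"error after   1 step : {errors[1]:.4f}")
print(f"error after  50 steps: {errors[50]:.4f}")
print(f"error after 500 steps: {errors[500]:.4f}")
```

In this toy setting the one-step error is small, yet the rollout error grows geometrically with the horizon, which is the failure mode that motivates trading some short-term accuracy for long-term consistency.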